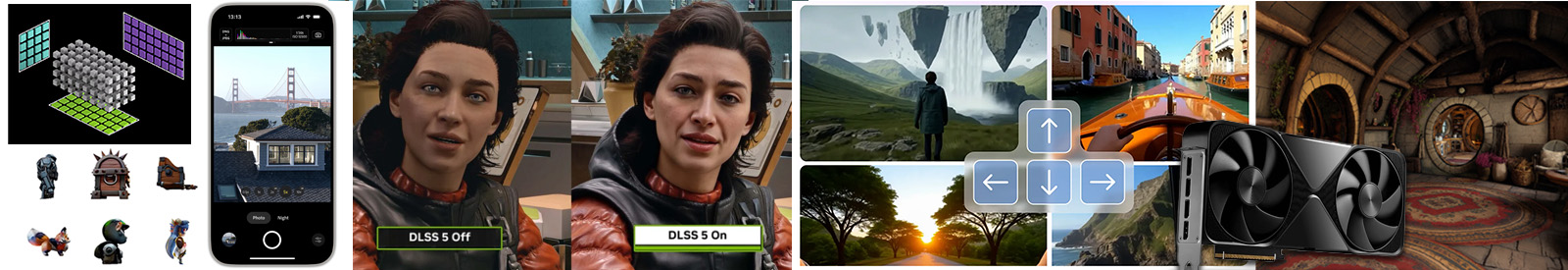

Visual and spatial computing tasks such as computational photography, 3D graphics, generative-AI-based world creation (images, videos, 3D scenes, interactive world models), and embodied agentic AI are key responsibilities of modern computer systems, ranging from sensor-rich smartphones and autonomous robots to large datacenters. These workloads demand exceptional system efficiency. This course examines the key ideas, techniques, and challenges associated with the design of parallel, heterogeneous systems that accelerate visual and spatial computing applications. It is intended for systems students interested in architecting efficient graphics and visual AI platforms (both new hardware architectures and domain-specific programming frameworks for these platforms) and for graphics, vision, robotics, and AI students who wish to understand throughput computing principles in order to design new algorithms that map efficiently to these platforms.

| Date | Lecture | Topics |
| --- | --- | --- |
| Mar 31 | | Broad survey of course topics and why establishing goals and constraints is so important to clear systems design and thinking. A few in-class design exercises. |
| Apr 02 | | Multi-shot alignment/merging, multi-scale processing with Gaussian and Laplacian pyramids, HDR (local tone mapping), advanced image understanding responsibilities of digital cameras (portrait mode, autofocus, etc.) |
| Apr 07 | | The Frankencamera, modern camera APIs, intro to the Halide programming language |
| Apr 09 | Scheduling Image Processing Algorithms in Halide | Key optimization ideas, a detailed look at Halide's scheduling algebra |
| Apr 14 | Generating ML Kernels using LLMs + Domain Specific Languages for Targeting Tensor Cores | Trends in using LLMs to write efficient ML kernel code, motivation for DSLs like cuTile, ThunderKittens, and Triton. |
| Apr 16 | The Design of Automatic Differentiation Primitives in Slang | Guest lecture by Yong He (NVIDIA). How the Slang language provides flexible support for auto-differentiation. Understanding the difference between mechanism and policy in system design. |
| Apr 21 | Designing 2D/3D Procedural Models for AutoDiff | Recovering 3D content with differentiable rendering, differentiable procedural modeling, design of programming abstractions to enable autodiff |
| Apr 23 | The Control/Realism Trade-Off in Visual Generative AI | The importance of predictable control in content creation. Techniques for inserting new forms of control into generative image synthesis, the role of human-interpretable abstractions, neurosymbolic representations, the role of code as a representation for content. |
| Apr 28 | High-Performance Generative Video Models | Basic architecture of recent video models. Key tricks behind how generative video models achieve high interactive performance. |
| Apr 30 | Neural Postprocessing: Making a Renderer's Output Look Photoreal Using AI | Basics of techniques like DLSS. How the role of a modern and future renderer is to produce inputs for a final AI postprocessing pass. |
| May 05 | Generating 3D Objects and 3D Scenes | Techniques for generating complex 3D scenes. Generating code vs. generating content. Direct 3D generation architectures, recovering 3D training data from image and video models. |
| May 07 | Introduction to World Models | Action-conditioned video models, non-pixel-based models, latent world representations, the challenge of maintaining long-term coherence. What applications is a world model good for anyway? (Entertainment? Training AI agents?) |
| May 12 | World Models (Part II) | Specific topics TBD |
| May 14 | Using World Models to Train Agents (and Robots) | Deeper look at the role of world models in training autonomous agents like robots. |
| May 19 | Building Blocks for 3D World Generation | Guest lecture by Ben Mildenhall and Justin Johnson (World Labs) |
| May 21 | How Generative AI is Being Used to Create Interactive Worlds at Roblox | Guest lecture by Kiran Bhat (Roblox) |
| May 26 | LLM-Based Embodied Agents for Games and Virtual Worlds | LLM-based techniques for creating agents that can learn skills in virtual worlds, techniques for using AI agents to simulate humans and human populations. |
| May 28 | High-Performance Simulators for Training AI Agents | Fast simulation platforms for training agents in virtual worlds, batch simulation concepts and challenges. |
| Jun 02 | Final Project Presentation Day | Have a great summer! |

| Jun 04 | Term Project Information |