
juliewang

hamood

Is there any situation where the cores are unequal? For example, on a multi-core system, do all the cores always have the same storage capacity? If not, does the GPU work scheduler know this information, and can it optimize task assignment based on it?

ccheng18

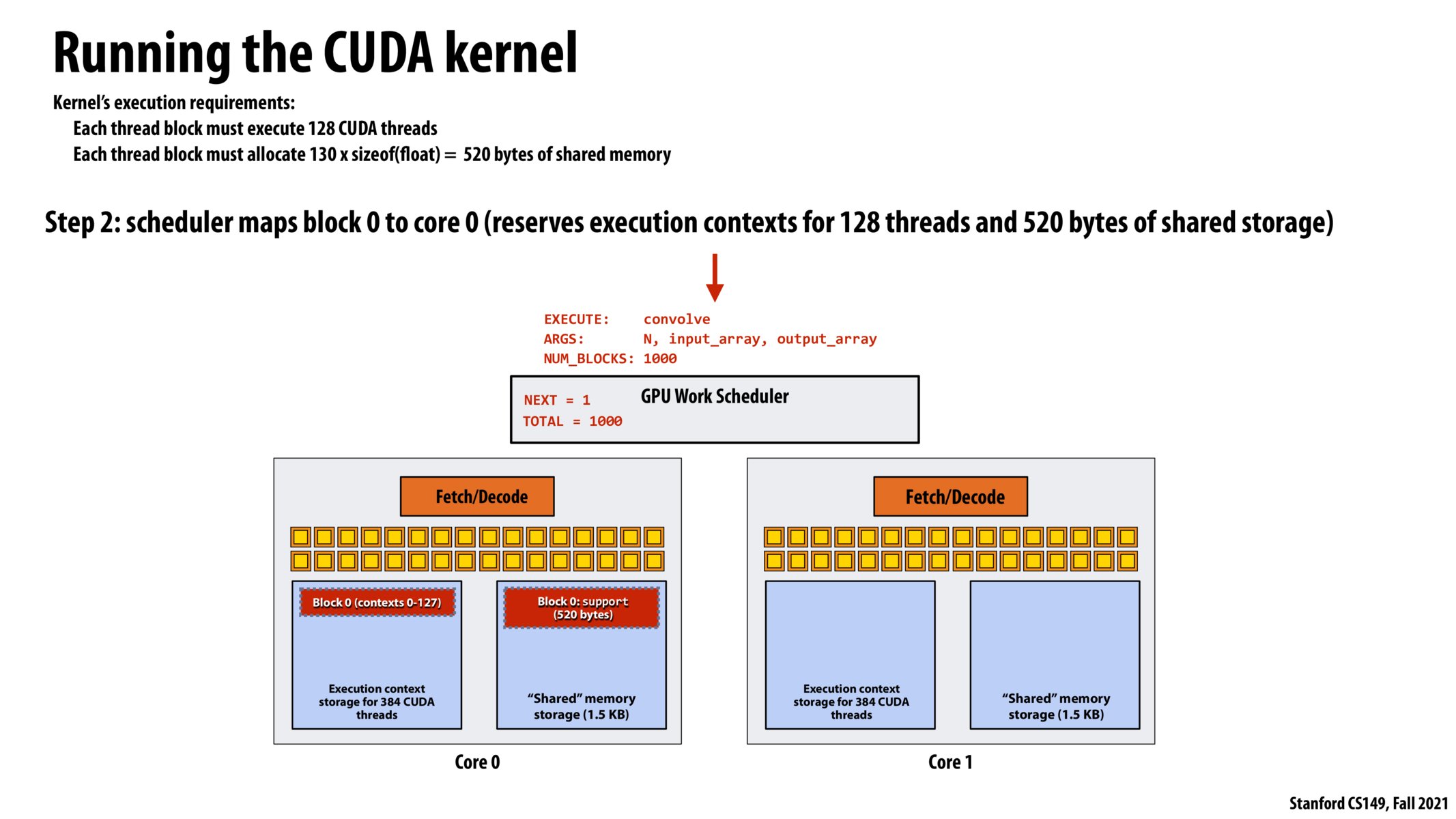

In class, you keep saying shared memory allocates 512 bytes of space, but the slides all say 520. Which is it, 512 or 520?

ccheng18

What is the actual definition of an execution context?


Copyright 2021 Stanford University

What happens if the size of the thread block is not a multiple of the number of threads in a warp? For example, if we have 120 threads in a block, would they still be mapped to ceil(120/32) = 4 warps?
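The arithmetic in the question can be sketched as follows. This is a minimal illustration (plain Python, not CUDA code), assuming a warp size of 32 and that a partially filled warp still occupies a whole warp, as the CUDA programming guide describes the warp count per block as ceil(threads / warp size). The function names `warps_per_block` and `inactive_lanes` are hypothetical helpers for illustration only.

```python
# Minimal sketch (not CUDA code): how many warps a thread block occupies,
# assuming 32-thread warps and that a partial warp rounds up to a full warp.
WARP_SIZE = 32  # assumed warp width (NVIDIA GPUs)


def warps_per_block(threads_per_block: int, warp_size: int = WARP_SIZE) -> int:
    """Integer ceiling division: a partially filled warp still counts as one warp."""
    return (threads_per_block + warp_size - 1) // warp_size


def inactive_lanes(threads_per_block: int, warp_size: int = WARP_SIZE) -> int:
    """Lanes in the last warp with no thread assigned (they sit idle, masked off)."""
    return warps_per_block(threads_per_block, warp_size) * warp_size - threads_per_block


if __name__ == "__main__":
    n = 120
    print(warps_per_block(n), inactive_lanes(n))
```

Under these assumptions, a 120-thread block occupies 4 warps, and the last warp has 8 of its 32 lanes unused, which is one reason block sizes are usually chosen as multiples of the warp size.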