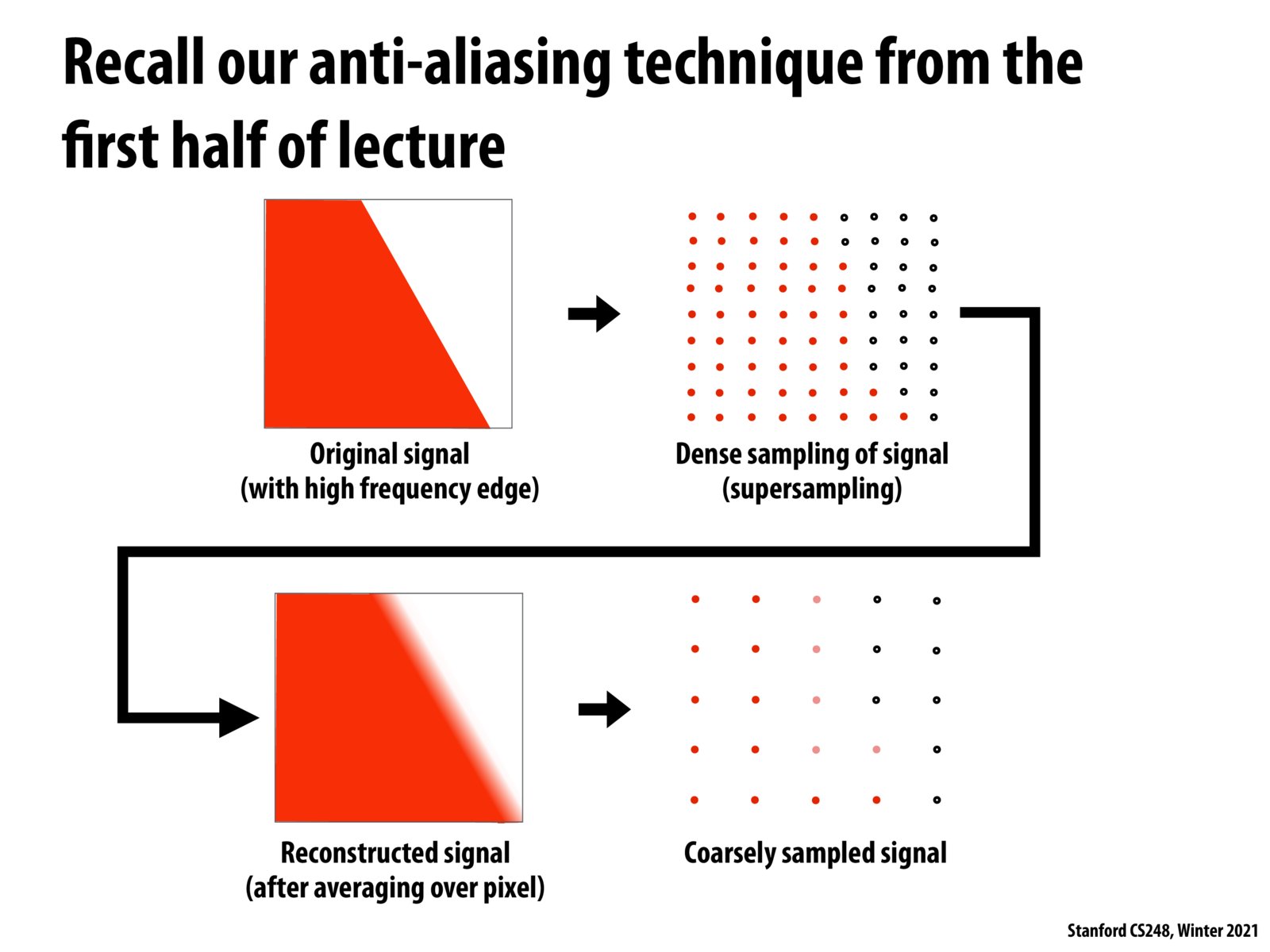

Here's my understanding, but please correct me if I'm wrong. Imagine the ground truth for the part of a triangle behind one screen pixel, written in discrete form (in reality it is continuous, since the triangle is defined by its three vertices):

"truth"

[2, 4, 8, 16, 32]

With dense sampling, say one sample for every two values, the result is:

dense sample:

[2, 8, 32]

And with sparse sampling, e.g. one sample out of every five values, the result is:

sparse sample:

[8]

If you convert the sparse sample directly into a pixel, the value is 8. But if you sample densely first and then average the samples into one pixel, you get (2 + 8 + 32) / 3 = 14, which is closer to the true average for that pixel, (2 + 4 + 8 + 16 + 32) / 5 = 12.4.
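To make the arithmetic easy to check, here is a minimal Python sketch of the toy example above. The names (`truth`, `dense`, `sparse`, `average`) and the choice of which indices to sample are my own assumptions for illustration; this is not how a real rasterizer is implemented.

```python
# Toy 1D "ground truth" behind a single pixel (values from the example above).
truth = [2, 4, 8, 16, 32]


def average(values):
    """Box-filter a list of samples down to one pixel value."""
    return sum(values) / len(values)


# Dense sampling: keep one value out of every two.
dense = truth[::2]        # [2, 8, 32]

# Sparse sampling: keep one value out of every five (here, the middle one).
sparse = truth[2::5]      # [8]

true_value = average(truth)     # 12.4, the ideal pixel value
dense_value = average(dense)    # 14.0, dense sampling then averaging
sparse_value = average(sparse)  # 8.0, one sample used directly

# Distance of each estimate from the ideal pixel value.
dense_error = abs(dense_value - true_value)
sparse_error = abs(sparse_value - true_value)

print("dense estimate:", dense_value, "error:", dense_error)
print("sparse estimate:", sparse_value, "error:", sparse_error)
```

In this particular example the dense estimate lands closer to the true average than the single sparse sample does. How large the gap is depends on the signal and on where the samples happen to fall.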


I'm a bit confused about how this works in practice. If you were to dense sample and return the average, then take a single sample per pixel of that, wouldn't you end up in the same place as after just doing the dense sample?