Something that initially confused me about false sharing was my assumption that each thread has its own cache. If so, how can two threads share a cache and create false sharing? I then realized that we're talking about threads on the same processor, not necessarily on different processors. I think false sharing shouldn't occur between two different processors (correct me if I'm wrong on this), and that it can only occur if the threads are being swapped on and off the same processor, and hence the same cache attached to that processor.

@derrick I don't think threads vs. processors is the right distinction here. False sharing is ultimately a consequence of two caches holding copies of the same cache line while their threads write to different addresses within that line. The main idea is that data a thread never uses gets pulled in and invalidated along with the data it does use, because caches transfer and track an entire cache line at once, as illustrated on the next slide.

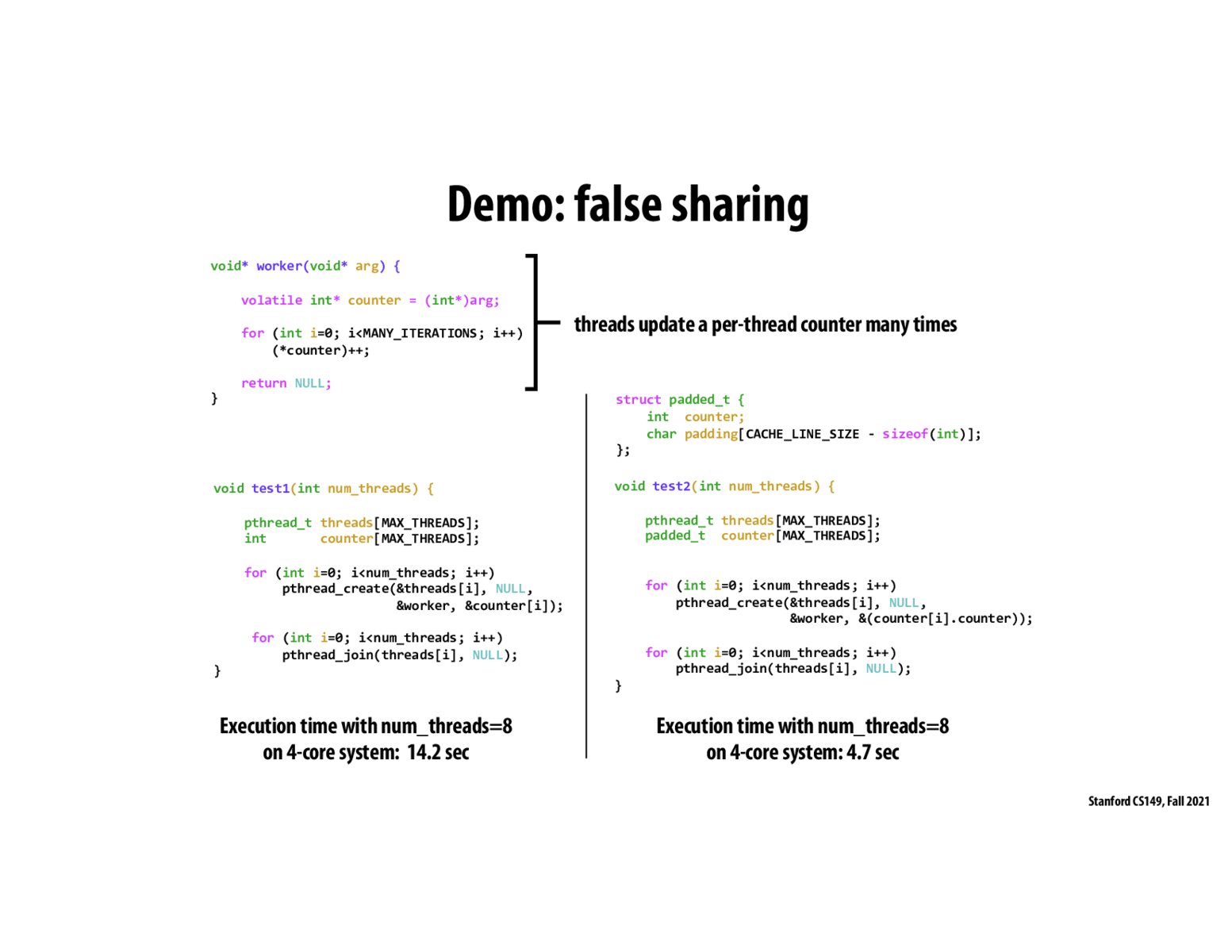

This is so counterintuitive: by wasting more resources (padding), we actually get a performance boost.

In this case, would the issue be avoided if we avoided writing directly to global/shared data and used per-thread private variables where possible?

@ruvensl Not necessarily, since the variables in this example are already essentially per-thread, and per-thread private variables could still be allocated next to each other in memory anyway.
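
To make the "allocated next to each other" point concrete, here is a minimal C++ sketch. It assumes a 64-byte cache line (typical, but not guaranteed on every machine), and the names `PerThreadSums`, `share_cache_line`, and `slots_share_line` are made up for illustration. It only checks addresses; it does not measure performance.

```cpp
#include <cstddef>
#include <cstdint>

// Assumption: 64-byte cache lines. Check your hardware before relying on this.
constexpr std::size_t kAssumedLineSize = 64;

// Index of the cache line an address falls into, under the assumed line size.
inline std::uintptr_t cache_line_index(const void* p) {
    return reinterpret_cast<std::uintptr_t>(p) / kAssumedLineSize;
}

// True if both addresses fall within the same (assumed) cache line.
inline bool share_cache_line(const void* a, const void* b) {
    return cache_line_index(a) == cache_line_index(b);
}

// Hypothetical "per-thread" data: thread t only ever touches sum[t],
// yet all four ints are packed into 16 contiguous bytes.
struct PerThreadSums {
    alignas(kAssumedLineSize) int sum[4];
};

// Returns true when the slot for thread 0 and the slot for thread 3
// land on the same cache line, which is the setup for false sharing.
inline bool slots_share_line() {
    PerThreadSums s{};
    return share_cache_line(&s.sum[0], &s.sum[3]);
}
```

So even though no two threads ever touch the same `int`, every slot lives on one line, and a write to any slot invalidates that line in the other caches.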


The big takeaway here is that you want each thread's frequently written data to sit on its own cache line. Otherwise, you have a false sharing problem: each write has to invalidate the copies of that line held in the other caches. When you pad and align, each thread has its own cache line, so its cache doesn't need to tell anyone else (the line stays in the M state).
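
Here is a rough C++ sketch of what "pad and align" might look like. It assumes a 64-byte cache line and C++17 (so that `std::vector` honors the over-alignment); `PackedCounter`, `PaddedCounter`, and `run_counters` are hypothetical names, not code from the lecture. Build it with thread support enabled (for example `-pthread` with GCC or Clang).

```cpp
#include <cassert>
#include <cstddef>
#include <thread>
#include <vector>

// Assumption: 64-byte cache lines.
constexpr std::size_t kCacheLine = 64;

// Packed layout: neighboring counters share a cache line.
// volatile keeps the compiler from collapsing the loop into a single add.
struct PackedCounter {
    volatile long value = 0;
};

// Padded and aligned layout: alignas rounds the size up to a full line,
// so each counter in an array occupies its own cache line.
struct alignas(kCacheLine) PaddedCounter {
    volatile long value = 0;
};

static_assert(sizeof(PaddedCounter) == kCacheLine,
              "each padded counter should fill exactly one assumed cache line");

// Each thread increments only its own counter; returns the sum of all counters.
template <typename Counter>
long run_counters(int num_threads, long iters) {
    std::vector<Counter> counters(num_threads);
    std::vector<std::thread> workers;
    for (int t = 0; t < num_threads; ++t) {
        workers.emplace_back([&counters, t, iters] {
            for (long i = 0; i < iters; ++i) {
                counters[t].value = counters[t].value + 1;
            }
        });
    }
    for (auto& w : workers) {
        w.join();
    }
    long total = 0;
    for (const auto& c : counters) {
        total += c.value;
    }
    return total;
}
```

Both `run_counters<PackedCounter>(n, iters)` and `run_counters<PaddedCounter>(n, iters)` compute the same total; the difference is only in layout. If you time the two with a large `iters`, the padded version would be expected to run faster on a multi-core machine, though the size of the gap depends on the hardware.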